ARKIVIST

Proof is the new intelligence.

Your models generate. Arkivist verifies. Every AI decision is decomposed into claims, tested against sources and logic, and anchored to a public ledger you don't own and can't rewrite.

AI moved from answering to deciding.

A generation ago, the AI question was “can the model answer?” Today, models triage alerts, deny claims, flag transactions, draft contracts, and recommend treatment paths. The model isn't the assistant anymore — it's the decision-maker.

That changes the question.

Why did it decide that?

What did it rely on?

Can we prove it was right?

Today, most companies cannot answer.

Between the model and the outcome.

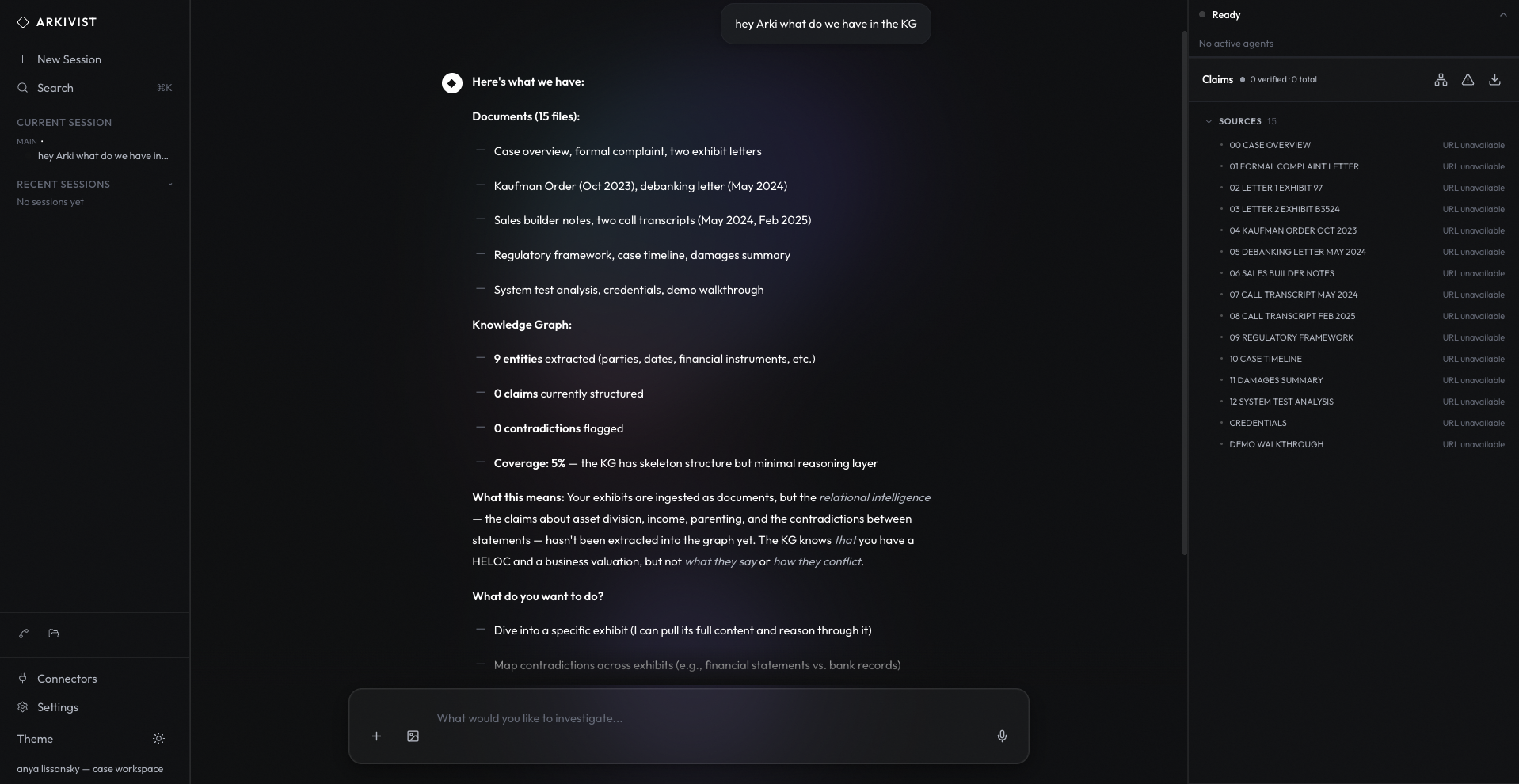

Arkivist is the verification layer for AI decisions. The model produces output. Arkivist decomposes that output into testable claims, validates each one against your sources and your logic, and writes the result to a public ledger anyone can audit.

The output your team ships is no longer “the model said so.” It becomes a record an enterprise can inspect, challenge, audit, and defend — every claim sourced, every contradiction surfaced, every decision provable.

Here's how, in three steps.

Decompose. Verify. Anchor.

01 Decompose

Every AI response is split into discrete, testable assertions — who, what, when, on what basis. Nothing is treated as monolithic. Nothing escapes review.
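
To make the idea concrete, here is a minimal sketch of decomposition. It is an illustration, not Arkivist's implementation: the `Claim` type, the `decompose` function, the one-claim-per-sentence rule, and the `[source: ...]` tag convention are all assumptions made for this example. The "who" and "when" fields are left out to keep it short.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One testable assertion (hypothetical shape, for illustration only)."""
    claim_id: int
    text: str   # the assertion itself
    basis: str  # the cited source, or "" when the output cites none


# Assumed convention: a claim may carry a tag such as "[source: invoice-17]".
SOURCE_TAG = re.compile(r"\s*\[source:\s*(.+?)\]")


def decompose(output: str) -> list[Claim]:
    """Naively split model output into one claim per sentence."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", output) if s.strip()]
    claims = []
    for i, sentence in enumerate(sentences, start=1):
        match = SOURCE_TAG.search(sentence)
        basis = match.group(1) if match else ""
        text = SOURCE_TAG.sub("", sentence)  # drop the tag, keep the assertion
        claims.append(Claim(claim_id=i, text=text, basis=basis))
    return claims


claims = decompose(
    "The invoice total is 420 USD [source: invoice-17]. Payment is overdue."
)
for claim in claims:
    print(claim.claim_id, claim.text, "| basis:", claim.basis or "none given")
```

In this sketch the second claim arrives with no basis, which is exactly the kind of assertion a review step would want to see flagged rather than buried in a paragraph.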

02 Verify

Claims are checked against your knowledge graph, your authoritative sources, and each other. Contradictions are surfaced, not hidden. Calibrated agents — not the same model that generated the output — make the call. Confidence is earned, not asserted.
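
A rough sketch of what checking claims "against your sources and each other" can look like. Everything here is an assumption for illustration: the `Claim` shape, the `verify` function, and the exact-match lookup that stands in for a knowledge graph and for the calibrated agents, neither of which this page specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical structured claim: subject, attribute, asserted value."""
    subject: str
    attribute: str
    value: str


def verify(claims, sources):
    """Check each claim against the sources, then against the other claims.

    `sources` maps (subject, attribute) to the authoritative value.
    Returns (verdict per claim, list of mutually contradicting claim pairs).
    """
    verdicts = {}
    for claim in claims:
        known = sources.get((claim.subject, claim.attribute))
        if known is None:
            verdicts[claim] = "unsupported"            # no source speaks to it
        elif known == claim.value:
            verdicts[claim] = "supported"
        else:
            verdicts[claim] = "contradicted_by_source"

    # Surface contradictions between claims, independent of the sources.
    contradictions = []
    for i, first in enumerate(claims):
        for second in claims[i + 1:]:
            same_topic = (first.subject, first.attribute) == (second.subject, second.attribute)
            if same_topic and first.value != second.value:
                contradictions.append((first, second))
    return verdicts, contradictions


sources = {("invoice-17", "total"): "420 USD"}
claims = [
    Claim("invoice-17", "total", "420 USD"),
    Claim("invoice-17", "total", "402 USD"),
    Claim("invoice-17", "due_date", "2024-03-01"),
]
verdicts, contradictions = verify(claims, sources)
```

The design point the sketch tries to capture is that an unsupported claim and a contradicted claim are different outcomes, and both are reported instead of being averaged into a single score.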

03 Anchor

Every verified decision is hashed and anchored to the Hedera Consensus Service — a public, governed ledger we did not build and cannot rewrite. The proof exists outside Arkivist. Anyone can verify it. We can’t take it back.
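
A minimal sketch of the hashing half of this step, under stated assumptions: the record layout, the choice of SHA-256, and the canonical JSON encoding are illustrative, since this page does not say which hash or format Arkivist uses. Submitting the digest to the Hedera Consensus Service is deliberately left out, because the integration is not described here.

```python
import hashlib
import json


def decision_digest(record: dict) -> str:
    """Hash a decision record in a canonical form (sorted keys, no extra
    whitespace) so the same record always yields the same digest."""
    canonical = json.dumps(
        record, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def matches_anchor(record: dict, anchored_digest: str) -> bool:
    """Recompute the digest and compare it with the one read from the ledger."""
    return decision_digest(record) == anchored_digest


# Hypothetical decision record, for illustration only.
record = {
    "decision_id": "d-001",
    "claims": [{"text": "The invoice total is 420 USD.", "verdict": "supported"}],
    "outcome": "approved",
}
digest = decision_digest(record)  # this value is what would be anchored

tampered = dict(record, outcome="denied")
print(matches_anchor(record, digest))    # the original record checks out
print(matches_anchor(tampered, digest))  # an edited record does not
```

Only the digest needs to be public for the check to work: anyone holding the record can recompute it, and any later edit to the record produces a different digest.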

“Most AI tells you what it thinks. Arkivist proves why.”

Arkivist is live.

Not a roadmap. Not a thesis deck with a demo. A running system, anchored on a public ledger, publicly verifiable from outside Ubiship.

1.3M+

AI decisions cryptographically anchored to Hedera Consensus Service.

Less than a coffee

Total infrastructure cost to anchor every one of those 1.3M proofs.

2800+ Elo

Arkivist’s adversarial chess arm, playing live on Lichess. Anyone can watch.

Chess isn't the product. It proves the architecture.

And it isn't one benchmark.

CHESS

Lichess, public games, >2800 Elo, ongoing

CYBERSECURITY

1,500+ OSS-Fuzz exercises, multi-turn defensive agent

ARC-AGI

ARC-AGI benchmark, Monte Carlo tree search (MCTS) planner with retrospective learning

LEGAL REASONING

Canadian Income Tax Act and all 956 federal statutes

Hedera Anchor · Testnet

Canadian Federal Corpus

Testnet today. Mainnet this cycle.

Don't trust Arkivist. Check the ledger.

“This is not a point solution. It is a decision protocol.”

Autonomy crossed a line.

In April 2026, Johns Hopkins published research showing that AI agents fail when authority conflicts — not at the edges, at the core. Meanwhile, the same systems are already deciding things in production.

AI is crossing from assistive to autonomous.

Autonomy creates liability.

Liability demands proof.

The forces are converging. The questions are already on someone's desk in your organization.

Where the proof shows up.

For your operators

Every output your team acts on arrives with a decomposed claim ledger. The work is shown. The contradictions are surfaced. “The model said so” stops appearing in their queue.

For your auditors

Every AI decision your organization has made becomes a record — replayable, verifiable, anchored to a ledger that doesn't take instructions from your CEO. You walk into a regulator's office with a system, not a story.

For your board

A single, signed answer to a single question: are our AI decisions defensible, as of this second, yes or no? Not a quarterly survey. Not a vendor's word. A cryptographic state.

Let's see what it does on yours.

The next AI race is not who generates the most convincing answer.

It is who can prove the decision.

Proof is the new intelligence.

Arkivist is how you prove it.